

🕓 February 15, 2026

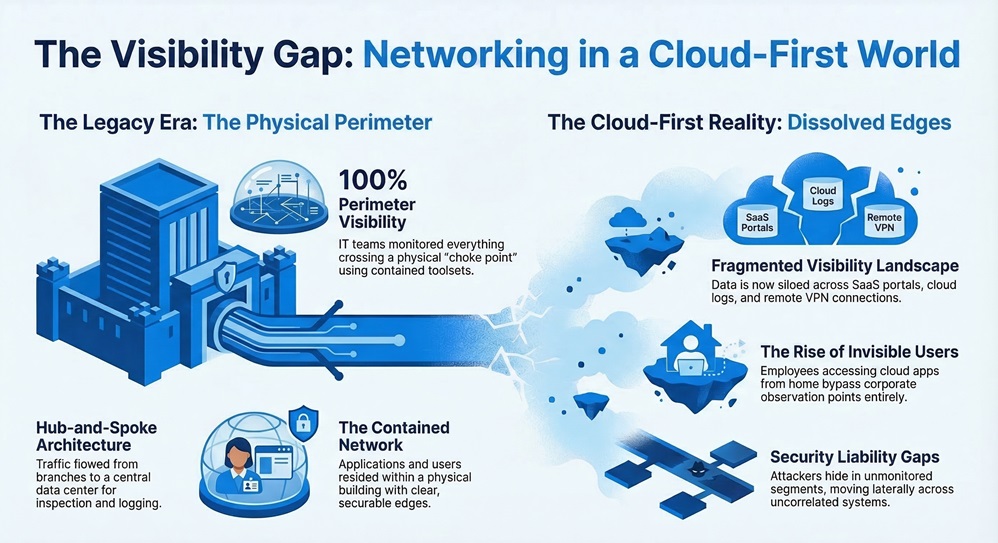

There was a time when enterprise network visibility meant walking into a data center and looking at a wall of blinking lights. Every application your organization depended on lived inside that building. Every user who needed access sat inside a corporate office connected to that building. The perimeter was physical. You could see it, secure it, and monitor everything crossing it from a relatively contained set of tools.

That model is gone. Today's enterprise network stretches across dozens of cloud services, hundreds of SaaS applications, remote employees working from home or traveling internationally, IoT devices on factory floors, branch offices in multiple countries, and partner connections that come and go as business relationships evolve. The applications your employees depend on most (collaboration tools, the ERP system, file sharing platforms) do not live inside a data center you control. They live somewhere in a public cloud, accessed over the public internet, from devices that may or may not be on a corporate network at any given moment.

This is what a cloud-first world actually looks like from a network operations perspective. And the challenge it creates for enterprise IT is not simply technical. It is operational. The tools, processes, and mental models that IT teams built their careers around were designed for a world where the network had clear edges. In a cloud-first environment, those edges have dissolved, and with them, a significant portion of the visibility and control that IT teams once took for granted.

Rebuilding that visibility and control in a way that works for the modern enterprise is one of the defining infrastructure challenges of this decade. This piece explains what has changed, where the gaps are, and how converged architectures are addressing the problem in ways that legacy tools fundamentally cannot.


Understanding the current challenge requires being specific about what traditional network monitoring was built to do and where that design breaks down in a cloud-first environment.

Legacy network monitoring tools were designed around a hub-and-spoke model. Traffic flowed from branch offices back to a central data center or headquarters, where it was inspected, logged, and forwarded to its destination. This architecture made visibility straightforward. You placed your monitoring tools at the hub, and by definition, all traffic passed through your observation point. You could see everything because everything had to travel through the same choke point.

The cloud destroyed this architecture in two ways simultaneously. First, it moved the applications off-premises. When your critical business applications live in Microsoft Azure, AWS, or as SaaS platforms like Salesforce, forcing traffic to backhaul through a central data center before reaching those applications creates unnecessary latency and degrades performance. Organizations that tried to maintain the hub-and-spoke model for cloud applications quickly discovered that their employees found workarounds, introducing the Shadow IT problem that security teams still wrestle with today.

Second, the cloud distributed the users. Remote work, accelerated sharply by the COVID-19 pandemic in 2020, permanently changed workforce geography. Users who access cloud applications directly from home or from coffee shops do not pass through any corporate-controlled observation point at all. From the perspective of legacy monitoring tools, these users are effectively invisible.

The result is a fragmented visibility landscape. Different tools monitor different segments of the network. The firewall at headquarters sees traffic from users in the office. A separate VPN solution tracks remote access connections but has limited insight into what those users actually do once connected. Cloud workloads generate their own logs in formats that may not integrate cleanly with on-premises monitoring systems. SaaS applications produce usage data through separate vendor portals that IT must check independently. Each appliance at each branch location has its own monitoring interface with its own alerting logic.

This fragmentation is not just operationally inconvenient. It is a security liability. Visibility gaps are where attackers hide. When a threat actor moves laterally through a network, the stages of that movement will appear as isolated events in different monitoring systems. Without a unified view that correlates those events into a coherent narrative, the attack pattern may not be recognized until significant damage has already occurred.


To build a solution, it helps to be precise about what the gaps actually are.

Application visibility across cloud services. When employees use cloud applications, both sanctioned and unsanctioned, IT needs to understand which applications are in use, who is using them, what data is being transferred, and whether those applications meet the organization's security and compliance standards. Without a Cloud Access Security Broker (CASB) integrated into the traffic inspection path, this information is simply unavailable.

User activity visibility outside the corporate perimeter. A remote employee working from home is accessing cloud applications, transferring files, and communicating with external parties. If their traffic does not pass through a controlled inspection point, IT has no visibility into any of this activity. Legacy VPN solutions partially address this by tunneling traffic back through the corporate network, but at the cost of significant performance degradation that leads users to disable them.

Unified security event correlation. When security events occur across multiple systems, whether a firewall alert, a failed authentication attempt, an anomalous file download, or unusual outbound traffic, connecting those dots requires that the events exist in the same data repository and can be queried with a common tool. In a stack built from multiple vendor products, this correlation happens manually, slowly, and incompletely.

Branch network visibility without dedicated staff. Most branch locations do not have IT staff on site. When a performance issue or security event occurs at a branch, IT must diagnose it remotely using whatever telemetry the branch appliances provide. If those appliances each report to different management systems, diagnosis requires logging into multiple tools and manually correlating what they report.

IoT and operational technology visibility. Manufacturing environments, healthcare facilities, and retail locations increasingly depend on connected devices that were not designed with security or manageability in mind. These devices often cannot run security agents and may communicate over protocols that traditional monitoring tools do not inspect or log effectively.
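To make the first gap above (application visibility across cloud services) more concrete, here is a minimal Python sketch of the kind of summary a CASB-style inspection step might produce from traffic records. It is an illustration only: the record fields (`user`, `app_domain`, `bytes_out`), the `SANCTIONED` allow-list, and the `filedrop.example` domain are all hypothetical and do not reflect any vendor's data model.

```python
from collections import defaultdict

# Hypothetical allow-list of sanctioned SaaS domains (illustrative only).
SANCTIONED = {"salesforce.com", "office.com"}


def summarize_app_usage(records):
    """Group traffic records by destination app and flag unsanctioned ones.

    Each record is a dict with the assumed keys: user, app_domain, bytes_out.
    """
    summary = defaultdict(lambda: {"users": set(), "bytes_out": 0})
    for rec in records:
        entry = summary[rec["app_domain"]]
        entry["users"].add(rec["user"])
        entry["bytes_out"] += rec["bytes_out"]

    # Convert to plain dicts so the report is easy to print or serialize.
    return {
        domain: {
            "sanctioned": domain in SANCTIONED,
            "users": sorted(info["users"]),
            "bytes_out": info["bytes_out"],
        }
        for domain, info in summary.items()
    }


records = [
    {"user": "alice", "app_domain": "salesforce.com", "bytes_out": 1200},
    {"user": "bob", "app_domain": "filedrop.example", "bytes_out": 50000},
    {"user": "alice", "app_domain": "filedrop.example", "bytes_out": 700},
]
report = summarize_app_usage(records)
```

The point of the sketch is that answering "which applications, which users, how much data" only works if every record reaches the same summarizing step; traffic that bypasses the inspection path simply never appears in `report`.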


Rebuilding visibility in a cloud-first world requires a different architectural starting point. The tools cannot be added on top of a fragmented infrastructure and expected to produce unified insight. The infrastructure itself needs to be designed so that all traffic, from every user type and every location, passes through a common inspection and logging layer.

This is the architectural logic that drives the convergence of networking and security into a single cloud-native platform. When all traffic flows through the same globally distributed inspection layer, regardless of where the user is located or how they connect, the platform can observe and record everything in a common data repository. The visibility is inherent to the architecture rather than dependent on bolting together monitoring tools from different vendors.

In practical terms, this means a user connecting from a branch office, a remote worker connecting via a client application, and an unmanaged device connecting through a clientless web portal all pass through the same security inspection engines. The events generated by all three connection types are stored in the same place, queryable with the same tools, and subject to the same policy enforcement logic.
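As a rough illustration of that idea, the toy Python model below routes three connection types through one handler that applies the same rule and writes to one log. The `InspectionLayer` class, its fields, and the sample resources are hypothetical and stand in for a far more complex real platform.

```python
from dataclasses import dataclass, field


@dataclass
class InspectionLayer:
    """Toy stand-in for a shared inspection and logging layer (hypothetical)."""

    blocked_resources: frozenset
    event_log: list = field(default_factory=list)

    def handle(self, user, connection, resource):
        """Apply the same rule to every connection type and log the result."""
        allowed = resource not in self.blocked_resources
        self.event_log.append(
            {"user": user, "connection": connection,
             "resource": resource, "allowed": allowed}
        )
        return allowed

    def query(self, **criteria):
        """Return logged events whose fields match all given criteria."""
        return [
            ev for ev in self.event_log
            if all(ev.get(key) == value for key, value in criteria.items())
        ]


layer = InspectionLayer(blocked_resources=frozenset({"filedrop.example"}))
layer.handle("alice", "branch", "erp")                   # branch office user
layer.handle("bob", "client", "filedrop.example")        # remote worker with client
layer.handle("guest", "clientless", "filedrop.example")  # unmanaged device via portal

blocked = layer.query(allowed=False)
```

Because there is only one `handle` path and one `event_log`, a single query covers every connection type without any per-source integration work.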

This architectural consistency is what makes genuine visibility possible. It also makes policy enforcement consistent, which is the other half of the control problem.


Visibility without control is monitoring. Control without visibility is guesswork. Organizations need both, and in a cloud-first environment, achieving control requires the same architectural rethinking that visibility demands.

Control in the context of enterprise networking means several distinct things. It means being able to define who can access what resources under what conditions and having confidence that those policies are actually enforced consistently. It means being able to change policies quickly when business requirements change, new threats emerge, or compliance requirements are updated. And it means being able to verify that the controls in place are working as intended.

In a multi-vendor point solution environment, control is fragmented across management interfaces. A firewall policy change at headquarters does not automatically propagate to branch firewalls. An access policy defined for remote users through the VPN solution may not align with the access policies enforced at the network perimeter. When these gaps exist, the organization has the appearance of control without the substance.

Zero Trust Network Access (ZTNA) is the framework that most precisely describes what genuine access control looks like in a cloud-first environment. Rather than granting broad network access based on whether a user has successfully authenticated to a VPN, ZTNA enforces granular access decisions for every resource request, based on user identity, device health, location, and the specific resource being requested. A user who authenticates successfully does not automatically gain access to everything on the network. They gain access to the specific applications and data their role requires, nothing more.
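The per-request decision described above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not a real ZTNA implementation: the `AccessRequest` fields, the `ROLE_RESOURCES` entitlements, and the `ALLOWED_COUNTRIES` set are hypothetical, and a production system would evaluate many more signals.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    user: str
    role: str
    device_healthy: bool
    country: str
    resource: str


# Hypothetical role-to-resource entitlements and permitted locations.
ROLE_RESOURCES = {
    "finance": {"erp", "payroll"},
    "engineering": {"git", "ci"},
}
ALLOWED_COUNTRIES = {"US", "DE"}


def decide(request):
    """Return (allowed, reason) for one resource request.

    Authentication is assumed to have already succeeded; it is not
    sufficient on its own to grant access to anything.
    """
    if not request.device_healthy:
        return False, "device failed health check"
    if request.country not in ALLOWED_COUNTRIES:
        return False, "location not permitted"
    if request.resource not in ROLE_RESOURCES.get(request.role, set()):
        return False, "resource not required by role"
    return True, "granted"


ok = decide(AccessRequest("alice", "finance", True, "US", "erp"))
wrong_resource = decide(AccessRequest("alice", "finance", True, "US", "git"))
bad_device = decide(AccessRequest("bob", "engineering", False, "DE", "git"))
```

Each call evaluates one request for one resource, so every decision also yields a precise log entry (who, what, and why) rather than a single "connected to VPN" record.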

Implementing ZTNA effectively requires the same architectural foundation as unified visibility. The access control decisions need to be made consistently across every connection type and enforced at a layer that sees all traffic. If ZTNA is implemented for remote users through a dedicated solution but does not apply to users inside branch offices, the organization has partial zero trust, which is another way of saying full trust for anyone who gets inside a building.

The convergence of networking and security into a unified cloud-native platform is the structural solution to fragmented visibility and inconsistent control. When all network traffic passes through a single platform with integrated security inspection, policy enforcement, and logging, the visibility and control gaps that exist in multi-vendor architectures are eliminated by design rather than patched by process.

From a visibility standpoint, a converged platform provides a single data repository for all network and security events. Network performance metrics, security alerts, application usage data, user activity logs, and policy enforcement records all exist in the same place. Monitoring and troubleshooting tools operate against this unified dataset. Correlating a security event with network performance data and user activity patterns can take seconds rather than the hours or days often required to manually assemble the same picture from multiple vendor systems.
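A minimal Python sketch of what such correlation could look like once events share one repository and one schema follows. The event fields (`ts`, `user`, `source`, `type`) and the thresholds are assumptions for illustration; the grouping rule used here (consecutive events for the same user no more than `window_seconds` apart, reported by at least `min_sources` different sources) is one simple heuristic among many.

```python
from collections import defaultdict


def correlate(events, window_seconds=300, min_sources=2):
    """Group each user's events into bursts and keep multi-source bursts.

    A burst is a run of events for one user in which consecutive events
    are no more than window_seconds apart.
    """
    by_user = defaultdict(list)
    for ev in events:
        by_user[ev["user"]].append(ev)

    incidents = []
    for user, evs in by_user.items():
        evs.sort(key=lambda e: e["ts"])
        burst = [evs[0]]
        for ev in evs[1:]:
            if ev["ts"] - burst[-1]["ts"] <= window_seconds:
                burst.append(ev)
            else:
                if len({e["source"] for e in burst}) >= min_sources:
                    incidents.append((user, burst))
                burst = [ev]
        # Check the final burst for this user as well.
        if len({e["source"] for e in burst}) >= min_sources:
            incidents.append((user, burst))
    return incidents


events = [
    {"ts": 0, "user": "alice", "source": "firewall", "type": "alert"},
    {"ts": 60, "user": "alice", "source": "auth", "type": "failed_login"},
    {"ts": 120, "user": "alice", "source": "dlp", "type": "large_download"},
    {"ts": 1000, "user": "bob", "source": "auth", "type": "failed_login"},
    {"ts": 0, "user": "carol", "source": "firewall", "type": "alert"},
    {"ts": 5000, "user": "carol", "source": "firewall", "type": "alert"},
]
incidents = correlate(events)
```

In a multi-vendor stack, the expensive part is everything this sketch takes for granted: getting all events into one list with consistent timestamps, user identifiers, and field names.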

From a control standpoint, centralized policy management means that a policy change made in the management interface applies everywhere simultaneously. A new access policy for a sensitive application applies to users at headquarters, users in branch offices, and remote workers without requiring separate configuration changes in each system. Compliance with the policy can be verified from the same interface that was used to define it.

The management model can be adapted to the organization's preferences and capabilities. Teams that want direct control over all aspects of policy and configuration can operate the platform through a self-service interface. Organizations that prefer to delegate some or all management responsibilities can work with the provider or a managed service partner. In either case, the underlying visibility into network and security events remains comprehensive and consistent.

The manufacturing sector demonstrates the practical stakes of visibility and control in a particularly clear way. Smart factory environments connect operational technology systems, robotics, sensors, and traditional IT infrastructure into a single network fabric. Many of the connected devices in these environments were designed for function rather than security. They cannot run agents, they communicate over specialized protocols, and they represent significant attack surface if compromised.

A converged SASE platform that inspects all traffic passing through the network, regardless of the protocol or the device type, provides visibility into this OT environment that point solutions cannot match. Security events generated by unusual device behavior can be correlated with network traffic anomalies and user activity patterns, giving security teams the context they need to distinguish legitimate operational activity from a potential compromise.

In retail environments with hundreds of distributed locations, the ability to monitor network performance and security events across all locations from a single interface changes what is operationally possible for IT teams. Performance degradation at a specific store becomes visible in real time without requiring IT staff on site. Security events at any location trigger alerts in the same system used to monitor all other locations, enabling faster response without proportionally larger IT headcount.

The cloud-first world did not just change where applications live. It fundamentally changed the relationship between enterprise IT and the network those applications depend on. The perimeter that once defined the boundary of what IT needed to see and control has been replaced by something far more distributed and complex.

Legacy approaches to visibility and control were not designed for this environment. Monitoring tools that watch a data center perimeter cannot see users working from home. Firewalls at branch locations report to their own management systems, separate from the VPN solution, separate from the cloud workload monitoring, separate from the SaaS application logs. The result is a patchwork of partial insight where the gaps are exactly the places an attacker will look to operate undetected.

Rebuilding visibility and control in this environment requires starting from a different architectural premise. When all traffic passes through a unified, cloud-native inspection layer, visibility becomes comprehensive by design. When policy management is centralized, control becomes consistent by design. These are not features that can be achieved by adding more tools to a fragmented stack. They require a platform that was built to handle the realities of a cloud-first world from the ground up.

Organizations that make this architectural shift find that their IT teams spend less time chasing down events across disconnected systems and more time using the insight they have to make better security and operational decisions. That is what visibility and control in a cloud-first world are supposed to look like, and it is achievable today for organizations willing to rethink the infrastructure that delivers it.


Integration between point solutions is possible but produces incomplete results. Each vendor's data model, log format, and alerting logic differs. Integration projects require ongoing maintenance as each vendor updates their product, and the correlation capabilities are limited by what each tool was designed to export. Unified visibility is an architectural property, not an integration project outcome.

Moving enforcement to a provider-operated platform does not mean IT gives up control. Control over policy definition, configuration, and access management remains with IT. What changes is that the platform infrastructure, the servers, software, and global network that enforcement policies run on, is maintained by the provider. This is comparable to how organizations use cloud services for applications without losing control over how those applications are configured and used.

Traditional VPN grants authenticated users broad network access, creating visibility challenges because those users can reach a wide range of resources. ZTNA enforces granular access decisions for each resource request, which simultaneously limits lateral movement risk and generates more precise access logs. IT can see exactly which resources each user accessed and when, rather than simply knowing that a user was connected to the network.

Application visibility, delivered through CASB capabilities integrated into the traffic inspection layer, allows IT to see which cloud applications employees are using, classify them as sanctioned or unsanctioned, and apply controls to specific actions within those applications. This addresses the Shadow IT problem and helps organizations maintain data governance as employees adopt new cloud tools.

Policy changes made in a centralized management interface apply across all locations and users in real time. There is no need to log into individual branch devices or coordinate separate update processes across multiple vendor systems. The change propagates through the platform's global infrastructure automatically.

Surbhi Suhane is an experienced digital marketing and content specialist with deep expertise in Getting Things Done (GTD) methodology and process automation. Adept at optimizing workflows and leveraging automation tools to enhance productivity and deliver impactful results in content creation and SEO optimization.
