🕓 February 15, 2026

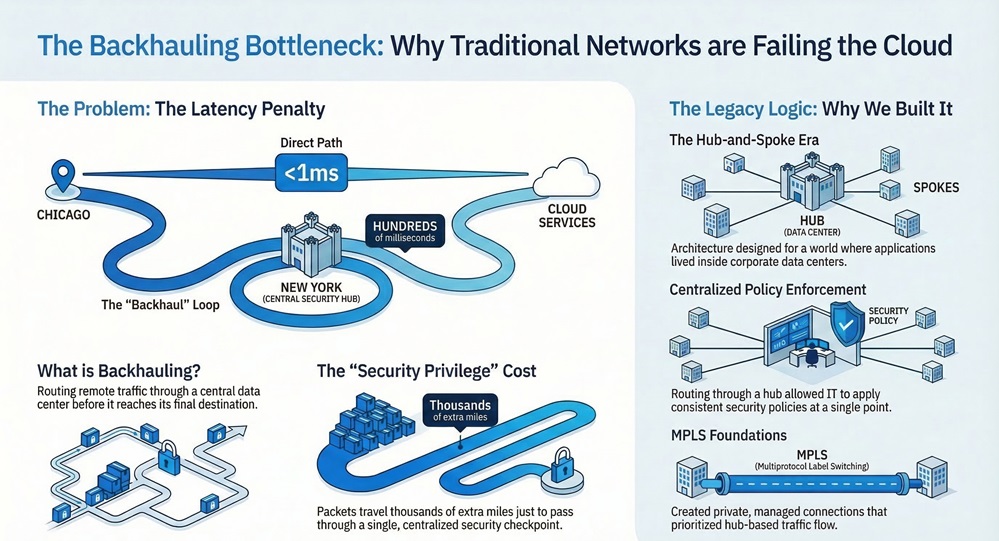

Picture a branch office employee in Chicago who needs to open a document stored in Microsoft SharePoint. The SharePoint environment is hosted in Microsoft's cloud infrastructure, which has a regional presence less than a millisecond away from that employee's physical location. In a rationally designed network, the request would travel directly from the employee's device to the nearest Microsoft cloud node, retrieve the document, and return it in moments.

Now picture what actually happens in thousands of enterprise networks built on traditional architecture. That same request travels from Chicago to the company's headquarters data center in New York. The data center inspects the traffic, applies security policies, and then forwards the request out to Microsoft's cloud. The response travels back from Microsoft to New York, gets inspected again, and then returns to Chicago.

A connection that geography suggests should take milliseconds can now take tens or even hundreds of milliseconds once the detour, the inspection steps, and the multiple round trips behind a single request are added up. Every packet traveled well over a thousand additional miles for the privilege of passing through a security checkpoint that was designed for a completely different network topology.

This is backhauling. It is one of the most consequential performance problems in enterprise networking today, and it exists not because network engineers made poor decisions, but because the architecture that makes backhauling necessary was designed for a world where applications lived inside corporate data centers and users always sat inside corporate offices. Neither of those conditions reliably holds true anymore.

Understanding what backhauling is, where it came from, what it costs, and why the infrastructure model that enabled it is being systematically replaced is essential context for any organization evaluating its network and security strategy.

Backhauling, in enterprise networking, refers to the practice of routing traffic from remote locations, branch offices, or end users through a centralized corporate data center or headquarters before that traffic reaches its intended destination. The traffic does not take the most direct path available. It takes a longer path that passes through a controlled observation and enforcement point.
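The cost of that longer path can be estimated with simple arithmetic. The sketch below is a rough, best-case model of the Chicago example, not a measurement: it assumes light covers about 200 km per millisecond in fiber, that the cloud node sits next to the Chicago branch, that the fiber follows the great-circle route, and that opening a document takes four sequential round trips. The constants `FIBER_KM_PER_MS` and `ROUND_TRIPS` and the helper names are illustrative assumptions.

```python
import math

# Assumed figure: light in optical fiber covers roughly 200 km per millisecond
# (about two thirds of its speed in a vacuum).
FIBER_KM_PER_MS = 200.0


def great_circle_km(lat1, lon1, lat2, lon2):
    """Haversine distance between two points on Earth, in kilometers."""
    r = 6371.0  # mean Earth radius in km
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


def round_trip_ms(one_way_km):
    """Best-case round-trip propagation delay over fiber for a one-way distance."""
    return 2 * one_way_km / FIBER_KM_PER_MS


CHICAGO = (41.8781, -87.6298)
NEW_YORK = (40.7128, -74.0060)

hub_km = great_circle_km(*CHICAGO, *NEW_YORK)

# Assumption: the cloud node is local to Chicago, so the backhauled path is
# Chicago -> New York -> Chicago-area cloud in each direction (twice the hub distance).
detour_rtt_ms = round_trip_ms(2 * hub_km)

# Assumption: one document open needs several sequential round trips
# (for example TCP handshake, TLS handshake, request and response).
ROUND_TRIPS = 4
added_ms = ROUND_TRIPS * detour_rtt_ms

print(f"Chicago to New York: {hub_km:.0f} km")
print(f"Added propagation delay per round trip: {detour_rtt_ms:.1f} ms")
print(f"Added delay over {ROUND_TRIPS} round trips: {added_ms:.1f} ms")
```

Under these assumptions the detour adds on the order of 20 ms per round trip from propagation alone. Real fiber routes are longer than the great-circle path, and inspection, queuing, and congestion at the hub add further delay on top of this figure.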

The mechanism that made backhauling standard practice for decades was MPLS, or Multiprotocol Label Switching. MPLS creates private, managed network connections between sites, delivering predictable performance and quality of service across dedicated circuits. Organizations built hub-and-spoke network topologies using MPLS, where branch offices connected to a central hub, and all traffic, including traffic destined for the internet or cloud services, was routed through that hub for inspection and policy enforcement.

This architecture made complete sense in its original context. When every application the business depended on lived inside the data center at headquarters, routing all branch traffic through headquarters meant users always had a direct path to the applications they needed. The network topology matched the application topology. Security policies could be applied consistently at a single, controlled point. IT had full visibility into everything crossing the network because everything crossed through the same place.

The model worked. Then cloud happened.

The shift to cloud-delivered applications and services did not happen overnight, but its cumulative effect on network architecture has been dramatic. Consider what a typical enterprise application portfolio looks like today compared to a decade ago.

Email, once hosted on Exchange servers inside the corporate data center, now runs on Microsoft 365 in Microsoft's cloud. Customer relationship management, once run on on-premises installations from vendors such as Oracle, is now consumed as SaaS from providers such as Salesforce over the internet. Collaboration tools like Microsoft Teams, Zoom, and Slack are entirely cloud-native, with no on-premises component at all. Storage and file sharing services like SharePoint, OneDrive, and Google Drive live in public cloud infrastructure optimized for direct internet access.

When these applications move to the cloud, the data center loses its role as the logical hub of network traffic. The applications are no longer there. But in organizations that maintained their hub-and-spoke MPLS architecture, the network topology did not change to reflect this reality. Branch traffic continued to route through headquarters even though the applications it was trying to reach were no longer at headquarters. It was heading to the cloud, via headquarters, a routing decision that added latency, consumed MPLS bandwidth, and degraded the performance of the very applications the organization was paying cloud premiums to access.

The latency impact is not theoretical. Real-time collaboration tools like video conferencing are particularly sensitive to network latency and packet loss. When a Teams or Zoom session travels from a branch office to a data center and back out to Microsoft or Zoom's cloud infrastructure, users experience audio degradation, video freezing, and dropped connections. These are not IT problems that users tolerate quietly. They affect productivity, create frustration, and eventually lead employees to find workarounds that introduce security risks.

The bandwidth problem compounds the performance issue. MPLS circuits are expensive, and they have fixed capacity. When cloud-bound traffic consumes MPLS bandwidth, it competes with genuinely internal traffic that legitimately needs to travel between branches and headquarters. Organizations that maintained hub-and-spoke topologies as cloud adoption grew found themselves upgrading MPLS capacity repeatedly, paying significant cost increases to route traffic through a data center that the destination application had already left behind.
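The contention described above is easy to see with a toy calculation. The following sketch uses entirely hypothetical traffic figures and a hypothetical 100 Mbps circuit; `BranchTraffic` and `utilization` are illustrative names, not part of any product.

```python
from dataclasses import dataclass


@dataclass
class BranchTraffic:
    """Peak traffic at one branch, in Mbps (all figures are hypothetical)."""
    internal_mbps: float  # traffic that genuinely terminates at headquarters
    cloud_mbps: float     # SaaS and internet traffic that is merely backhauled


def utilization(traffic: BranchTraffic, circuit_mbps: float, backhaul_cloud: bool) -> float:
    """Fraction of the MPLS circuit consumed at peak."""
    load = traffic.internal_mbps + (traffic.cloud_mbps if backhaul_cloud else 0.0)
    return load / circuit_mbps


branch = BranchTraffic(internal_mbps=30.0, cloud_mbps=90.0)
circuit = 100.0  # hypothetical MPLS circuit size in Mbps

with_backhaul = utilization(branch, circuit, backhaul_cloud=True)
without_backhaul = utilization(branch, circuit, backhaul_cloud=False)

print(f"Cloud traffic backhauled: {with_backhaul:.0%} of circuit")
print(f"Cloud traffic sent direct: {without_backhaul:.0%} of circuit")
```

In this made-up mix the circuit is oversubscribed only because of cloud-bound traffic. A larger circuit would relieve the congestion but would still carry that traffic on a detour.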

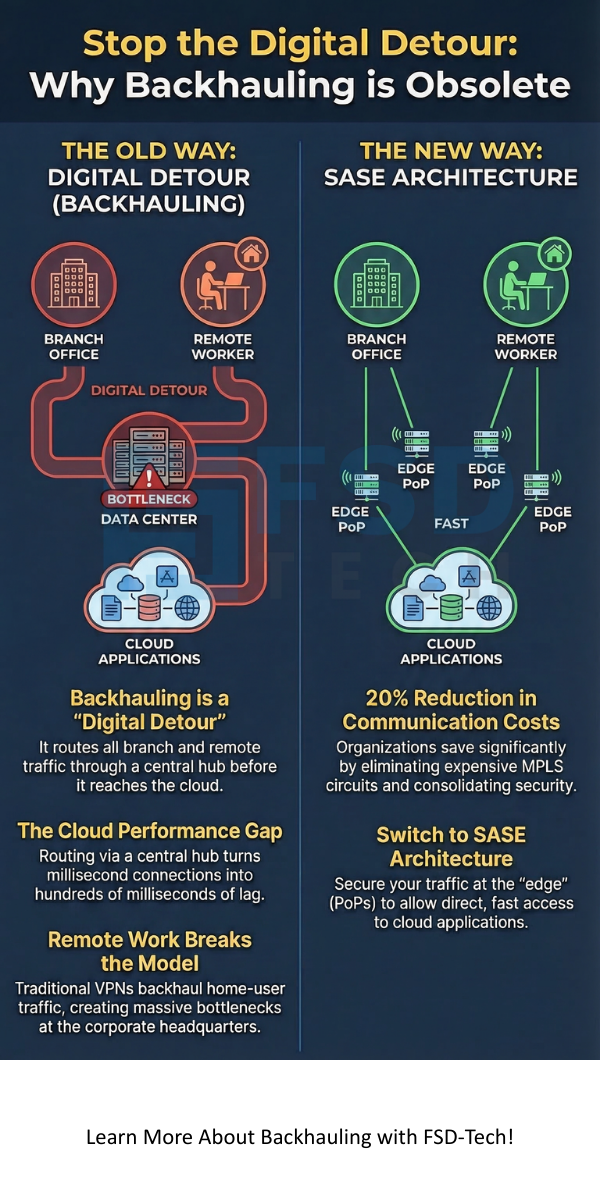

If cloud adoption strained the backhauling model, the permanent shift to distributed remote work broke it entirely. In a hub-and-spoke network, the implicit assumption is that users are at branch locations connected to the corporate network via managed circuits. Remote workers do not have MPLS connections from their homes. They connect over the public internet, typically through VPN solutions that are themselves a form of backhauling.

Traditional remote access VPN works by tunneling all of a remote user's traffic through a VPN concentrator located at corporate headquarters or a data center. From there, traffic is inspected and forwarded to its destination. This approach has the same fundamental problem as MPLS backhauling: it routes traffic through a central location that adds latency and consumes centralized infrastructure capacity, regardless of where the destination actually is.

When remote worker traffic is destined for cloud applications, VPN backhauling creates the worst possible routing outcome. The user is at home, perhaps physically located near a cloud provider's regional infrastructure. Their traffic travels over the public internet to the VPN concentrator at headquarters, from there to the cloud application, and then back through the same path in reverse. The performance degradation relative to a direct connection can be severe, particularly for real-time applications.

The capacity problem becomes critical when large numbers of employees work remotely simultaneously. VPN concentrators are hardware devices with fixed processing limits. When user volumes spike, they become bottlenecks. Organizations that were caught with inadequate VPN capacity during sudden transitions to remote work experienced connectivity failures at exactly the moment reliable remote access was most critical to business continuity.

The reason backhauling became standard practice was not purely about network topology. It was about security. By routing all traffic through a central point, IT could apply a comprehensive security stack (firewalls, intrusion prevention systems, web filtering, and malware inspection) to everything crossing the network. Branch offices did not need their own security infrastructure because the hub handled it centrally.

This was a legitimate and effective security architecture when the traffic patterns matched the model. The problem is that security applied at a central hub only works if all the traffic you care about actually passes through that hub. In a cloud-first, remote-work environment, significant volumes of traffic never touch the corporate hub at all. A remote worker accessing cloud applications directly bypasses the entire on-premises security stack. A branch that has been given direct internet access to reduce MPLS costs loses the security inspection that justified routing through headquarters.

The alternative that many organizations adopted was split tunneling, where only traffic destined for internal corporate resources travels through the VPN while internet-bound traffic goes directly. This resolves the performance problem but creates an even larger security gap. Traffic that does not pass through the central inspection point is uninspected, unlogged, and ungoverned.
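The difference between full tunneling and split tunneling comes down to a per-destination routing decision. Here is a minimal sketch of that decision using Python's standard `ipaddress` module. The corporate prefixes and the `next_hop` helper are hypothetical; a real VPN client applies the equivalent logic through its routing table rather than in application code.

```python
import ipaddress

# Hypothetical internal prefixes; a real deployment would use its own address plan.
CORPORATE_PREFIXES = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("172.16.0.0/12"),
]


def next_hop(destination: str, split_tunnel: bool) -> str:
    """Return 'vpn' or 'direct' for a destination IP under a given tunnel mode."""
    addr = ipaddress.ip_address(destination)
    is_internal = any(addr in net for net in CORPORATE_PREFIXES)
    if is_internal or not split_tunnel:
        return "vpn"    # travels to the hub and is inspected there
    return "direct"     # faster path, but bypasses central inspection


# 203.0.113.10 is a documentation address standing in for a cloud service.
for dest in ("10.20.30.40", "203.0.113.10"):
    print(dest,
          "full tunnel:", next_hop(dest, split_tunnel=False),
          "split tunnel:", next_hop(dest, split_tunnel=True))
```

Only the second destination changes behavior between the two modes, and that is exactly the traffic that ends up uninspected under split tunneling.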

The security argument for backhauling assumed that applying comprehensive security at the hub was better than not applying it at all. In a cloud-first environment, holding to that assumption forces a trade-off between performance and security that no organization should have to make. Modern architectures that deliver security at the point where traffic actually flows make this trade-off unnecessary.

The architectural alternative to backhauling is direct-to-cloud connectivity with security enforcement distributed to the point where traffic enters and exits the network. Instead of routing traffic through a central hub for inspection, the security stack travels with the traffic to wherever it flows.

In a SASE architecture, this is achieved through a globally distributed network of Points of Presence, each of which runs the full security inspection stack. Traffic from a branch office connects to the nearest PoP, receives comprehensive security inspection, including firewall enforcement, intrusion prevention, malware detection, web filtering, and data loss prevention, and then egresses to its destination from that PoP. The traffic takes the shortest path to its destination while still passing through a full security inspection layer.
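How a branch or client ends up at the "nearest" PoP varies by platform. As one simplified illustration, the sketch below picks the PoP with the lowest measured round-trip time. The probe values and PoP names are hypothetical, and real platforms may rely on anycast routing, DNS steering, or other mechanisms instead of explicit probing.

```python
from typing import Dict


def nearest_pop(rtt_ms_by_pop: Dict[str, float]) -> str:
    """Pick the PoP with the lowest measured round-trip time."""
    if not rtt_ms_by_pop:
        raise ValueError("no PoP measurements available")
    return min(rtt_ms_by_pop, key=rtt_ms_by_pop.get)


# Hypothetical probe results from a Chicago branch, in milliseconds.
probes = {"chicago": 3.0, "new-york": 22.0, "dallas": 25.0}

selected = nearest_pop(probes)
print(f"Branch attaches to PoP: {selected}")
```

The point of the sketch is that the inspection point is chosen by proximity to the user rather than fixed at headquarters, so the security stack sits on the short path instead of forcing a long one.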

For cloud-bound traffic, this means the security PoP is geographically close to both the user and the cloud service they are accessing. Latency is minimized, performance approaches what direct internet access would provide, and security is not compromised. For users accessing private applications at headquarters or in a data center, traffic is routed optimally over a global private backbone, with quality of service policies applied to prioritize business-critical applications.

Remote users benefit from the same architecture. Instead of tunneling traffic to a VPN concentrator at headquarters, a remote worker's client connects to the nearest SASE PoP. Security inspection happens at that PoP. Cloud applications are accessed directly from that PoP with full security coverage. Private applications are accessed via the global backbone with the same security policies that apply to any other connection type. Performance is optimized regardless of where the user is located or what they are accessing.

The practical difference in user experience is significant. Real-time collaboration tools function without the degradation that backhauling introduces. File transfers to cloud storage complete faster. Web browsing is more responsive. Users who previously worked around VPN performance problems by disabling it or using personal devices have less incentive to do so because the compliant path is also the fast path.

Beyond performance, backhauling carries direct financial costs that organizations often underestimate because they are distributed across multiple line items in the IT budget.

MPLS circuits are among the most expensive connectivity options available, priced at a significant premium over commodity broadband for equivalent bandwidth. Organizations that route large volumes of cloud-bound traffic over MPLS are paying premium circuit costs to carry traffic whose destination has already moved off the network those circuits were built to serve.

VPN concentrator hardware requires procurement, maintenance, and periodic replacement. Capacity upgrades require purchasing new hardware and scheduling deployment. When user volumes grow faster than planned, emergency hardware procurement at premium prices becomes necessary.

The data center infrastructure that hosts the central security stack, whether physical appliances or virtual machines running security functions, represents ongoing capital and operational expenditure. Keeping that stack current requires regular hardware refreshes and software licensing. The personnel time required to manage, configure, and troubleshoot it all represents a recurring operational cost that rarely appears on a single line item but accumulates to significant totals when measured comprehensively.

Organizations that have moved away from backhauling architectures consistently report reductions in total communication and infrastructure costs. One global manufacturer reported a 20% reduction in communication costs after replacing MPLS-based backhauling with a cloud-native SASE platform. The savings came from eliminating expensive MPLS circuits for cloud-bound traffic, reducing VPN infrastructure requirements, and consolidating the security stack that had previously required separate hardware at each location.

Backhauling is not obsolete because someone decided it was a bad idea. It is obsolete because the conditions that made it sensible no longer exist at the scale required to justify it.

Applications have left the data center. Users have left the office. The traffic patterns that hub-and-spoke architecture was built to serve have fundamentally changed. Maintaining a network architecture organized around routing all traffic through a central hub when neither the applications nor the users are reliably at that hub anymore means accepting performance degradation, excessive costs, and incomplete security as permanent operating conditions.

The organizations that are moving away from backhauling are not doing so because a technology vendor told them to. They are doing so because the business impact of maintaining an architecture misaligned with their actual traffic patterns has become impossible to ignore. Employees complain about application performance. IT teams spend disproportionate time managing capacity constraints. Security gaps created by traffic that bypasses the hub remain unaddressed. Cloud investments underperform because the network delivers their traffic inefficiently.

Modern architectures that deliver security where traffic actually flows, at globally distributed inspection points close to users and cloud services, resolve all of these problems simultaneously. They are not a workaround for backhauling's limitations. They are a replacement for the underlying model that made backhauling necessary in the first place.

Backhauling was a rational response to a specific set of network and application conditions. When data centers were the center of gravity for enterprise applications and all users worked inside corporate offices, routing traffic through a controlled central hub for security inspection was both logical and efficient. The model served its purpose well for many years.

The cloud-first, distributed-workforce environment that defines enterprise IT today has made those original conditions obsolete. Applications have moved to the cloud. Users are everywhere. The traffic patterns that backhauling was designed to manage have shifted so fundamentally that maintaining a hub-and-spoke routing model now means deliberately making the network worse than the available infrastructure would allow.

The performance costs are measurable in latency metrics and employee productivity. The financial costs appear in MPLS circuit bills, VPN hardware budgets, and data center operational expenses that grow without delivering proportional business value. The security costs are visible in the gaps created when traffic bypasses central inspection points entirely.

Replacing backhauling is not simply a network refresh. It is an architectural realignment that matches the network topology to the actual patterns of modern enterprise work. Distributing security enforcement to where traffic flows, rather than routing traffic to where security enforcement sits, resolves the performance, cost, and security problems simultaneously. That is why backhauling is not just struggling. It is structurally obsolete, and the organizations that recognize this earliest will operate faster, more secure, and more cost-effective networks than those that continue maintaining infrastructure designed for a world that no longer exists.

In networks where all critical applications live inside a corporate data center, backhauling through that data center adds minimal unnecessary latency because the data center is the destination. The performance problem becomes severe when applications have moved to cloud services that are not at the data center, turning the hub into an unnecessary detour rather than a logical routing destination.

Adding MPLS capacity addresses the bandwidth constraint temporarily but does not fix the underlying routing inefficiency. Traffic still travels to the hub before reaching cloud destinations, regardless of how much bandwidth the MPLS circuit provides. The latency added by geographical detour is not resolved by faster circuits.

Split tunneling routes only traffic destined for internal corporate resources through the VPN while allowing internet-bound traffic to go directly. This improves performance for cloud-bound traffic but creates security gaps because that traffic bypasses the central inspection stack entirely. SASE solves the problem properly by applying security at the point of internet egress rather than requiring a trade-off between performance and security coverage.

In a SASE architecture, security inspection is distributed across a global network of PoPs. All traffic passes through the nearest PoP for comprehensive security inspection before reaching its destination. The security stack is not eliminated by moving away from backhauling. It moves with the traffic to wherever that traffic flows.

Migration timelines vary based on the size and complexity of the organization's existing infrastructure. Modern SASE platforms support gradual migration, allowing organizations to transition site by site without full cutover. Branch locations can be connected to the SASE platform while existing MPLS circuits remain in place during transition, reducing risk and allowing the organization to validate performance improvements before completing the migration.

Surbhi Suhane is an experienced digital marketing and content specialist with deep expertise in Getting Things Done (GTD) methodology and process automation. Adept at optimizing workflows and leveraging automation tools to enhance productivity and deliver impactful results in content creation and SEO optimization.
