

🕓 February 15, 2026

Scaling your service mesh well is the key to keeping your microservices fast and reliable as traffic hits new peaks. We've all been there: your app is growing, you add more services, and suddenly things feel a bit laggy. You might wonder, "Is the network holding us back?" It's a common hurdle, but once you understand how the data plane and control plane interact, you'll see the path forward.

When we talk about a service mesh (SM), we mean a dedicated infrastructure layer that handles how your services talk to each other. It takes the heavy lifting of security, traffic management, and observability off your developers' plates. But as you add hundreds of proxies, the mesh itself needs to scale, too.

Have you ever noticed your latency creeping up as you add more features?

That's often because the mesh needs tuning. Scaling a service mesh isn't just about adding more power; it's about making the system smarter.

In my experience, many teams treat the mesh like a "set it and forget it" tool. That's a mistake. Most service meshes use a "sidecar" pattern, where a small proxy (like Envoy) runs next to every service instance, usually as an extra container in the same pod.

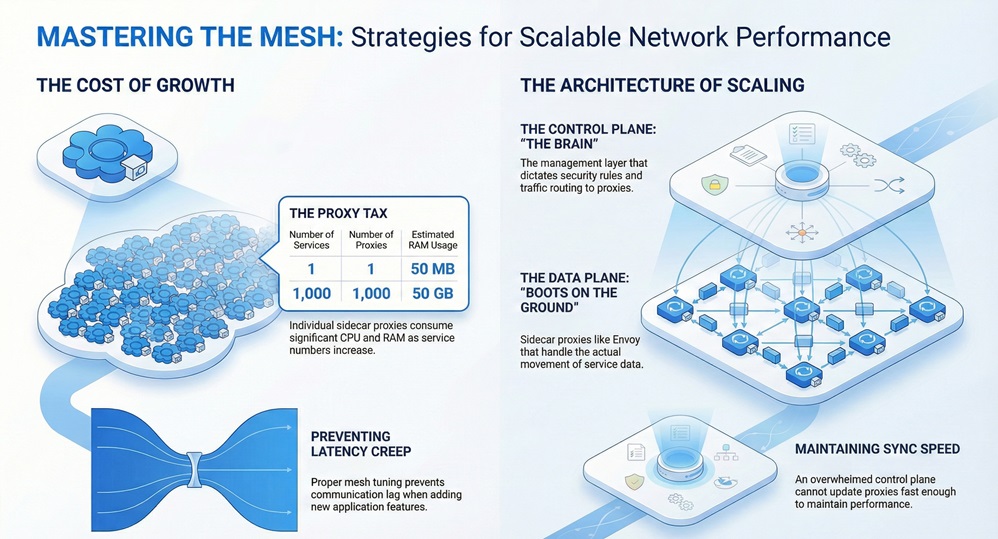

As you scale, these sidecars consume CPU and memory. Because there is one sidecar per pod, 1,000 pods means 1,000 proxies. If each one uses 50MB of RAM, you're looking at roughly 50GB just for the network layer! That's why we need to focus on efficiency.
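
The arithmetic above is easy to rerun for your own cluster. Here is a minimal Python sketch, assuming one sidecar per pod and a flat per-proxy memory figure; the 50 MB is the illustrative number from this post, and the three replicas per service in the second call are a hypothetical example, not a measurement:

```python
def sidecar_memory_gb(services: int, replicas_per_service: int, mb_per_proxy: float) -> float:
    """Total sidecar memory in GB (1 GB = 1,000 MB, matching the rough math above)."""
    pods = services * replicas_per_service  # one sidecar proxy per pod
    return pods * mb_per_proxy / 1000


print(sidecar_memory_gb(1_000, 1, 50))  # 50.0
print(sidecar_memory_gb(1_000, 3, 50))  # 150.0 with three replicas per service
```

Note how replicas multiply the overhead: the proxy count tracks pods, not services.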

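To scale properly, we have to look at two main parts: the control plane, the "brain" that computes configuration and pushes it to every proxy, and the data plane, the proxies that sit in the request path and carry your traffic.

If the brain (control plane) gets overwhelmed, it can't update the proxies fast enough. If the proxies (data plane) are too heavy, they slow down your app.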

How do we actually make this work in the real world? It comes down to a few key moves.

1. Optimize the Sidecar Configuration

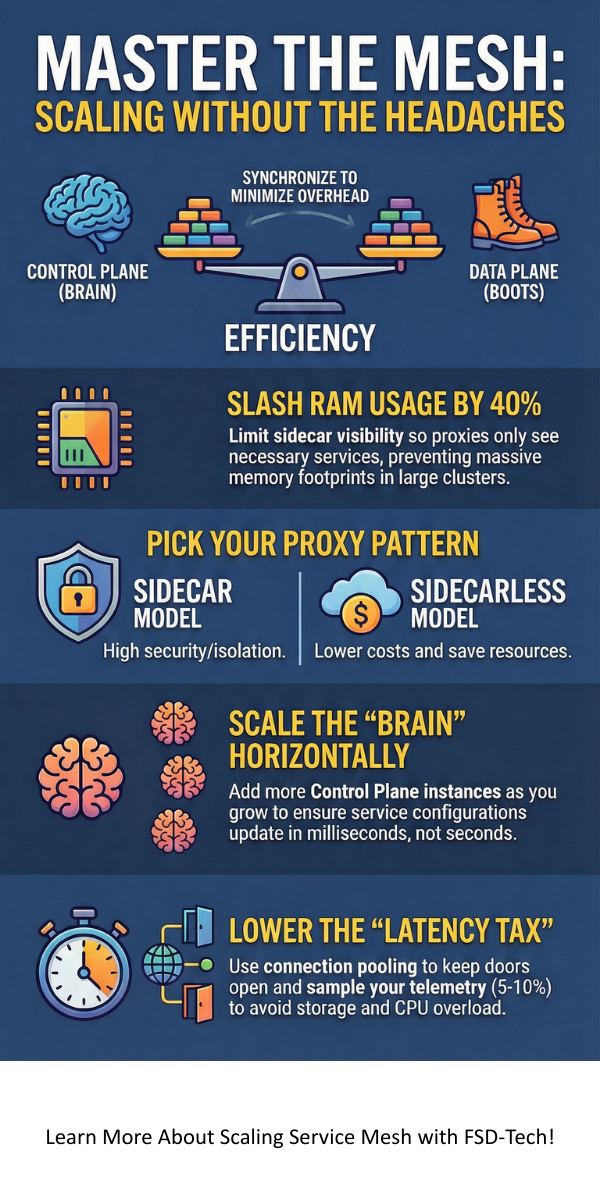

By default, many SM tools send the entire cluster's map to every single proxy. Does a "Payment Service" really need to know about the "Image Resize Service"? Probably not.

To keep things light, use Istio's Sidecar resource (or your mesh's equivalent) to limit which services each proxy receives configuration for. This reduces the memory footprint significantly. In fact, we've seen cases where this simple change cut memory usage by 40%.
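
In Istio, this is done with the `Sidecar` custom resource. Below is a minimal sketch that builds one as a Python dict and prints it as JSON, which `kubectl apply -f` accepts as well as YAML. The namespace names are hypothetical: proxies in `payments` would only receive configuration for services in their own namespace (`./*`) and in `istio-system`. Check which `apiVersion` your Istio release serves before applying it.

```python
import json


def sidecar_manifest(namespace: str, allowed_namespaces: list[str]) -> dict:
    """Istio Sidecar resource that narrows the config pushed to proxies in `namespace`."""
    # "./*" means "every service in my own namespace"; "ns/*" adds another namespace.
    hosts = ["./*"] + [f"{ns}/*" for ns in allowed_namespaces]
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "Sidecar",
        # No workloadSelector: applies to every workload in the namespace.
        "metadata": {"name": "default", "namespace": namespace},
        "spec": {"egress": [{"hosts": hosts}]},
    }


manifest = sidecar_manifest("payments", ["istio-system"])  # hypothetical namespaces
print(json.dumps(manifest, indent=2))
```

If the payment service does need to call an image service later, you add that namespace to the list; everything else stays out of its proxy's memory.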

2. Choose the Right Architecture: Sidecars vs. Sidecarless

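There is a big debate right now about "sidecarless" meshes, such as Istio's ambient mode. Instead of putting a proxy in every pod, a shared proxy on each node (Istio's ztunnel) handles secure Layer 4 traffic, and optional "waypoint" proxies add Layer 7 features only where you need them.

Which one fits your needs? If you're running on a tight budget, the sidecarless approach might be your best friend, because the number of proxies grows with your nodes rather than with your pods.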
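
To see why, compare how the proxy fleet grows in each model. This is a rough sketch, assuming one sidecar per pod versus one shared proxy per node. The per-proxy memory figures are hypothetical inputs (a shared node proxy usually needs more memory than a single sidecar), and Layer 7 waypoint proxies are left out:

```python
def proxy_fleet_memory_gb(pods: int, nodes: int, model: str,
                          mb_per_sidecar: float, mb_per_node_proxy: float) -> float:
    """Estimate total proxy memory (1 GB = 1,000 MB) for a mesh data plane."""
    if model == "sidecar":
        return pods * mb_per_sidecar / 1000      # one proxy per pod
    if model == "per-node":
        return nodes * mb_per_node_proxy / 1000  # one shared proxy per node
    raise ValueError(f"unknown model: {model!r}")


# Hypothetical cluster: 1,000 pods spread over 50 nodes.
sidecar = proxy_fleet_memory_gb(1_000, 50, "sidecar", mb_per_sidecar=50, mb_per_node_proxy=200)
per_node = proxy_fleet_memory_gb(1_000, 50, "per-node", mb_per_sidecar=50, mb_per_node_proxy=200)
print(f"sidecar: {sidecar} GB, per-node: {per_node} GB")  # sidecar: 50.0 GB, per-node: 10.0 GB
```

Plug in numbers measured on your own cluster; the gap narrows as pods-per-node falls or as you add waypoint proxies.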

3. Manage the Control Plane Load

As your cluster grows, the control plane has to work harder to push updates. You can scale the control plane horizontally, which means adding more "brains" (replicas) to share the load. Each replica then serves fewer proxies, so when a new service starts, it gets its configuration quickly instead of waiting behind a backlog of updates.
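
Here is a back-of-the-envelope sizing sketch. It assumes each proxy holds its configuration stream to exactly one control plane replica, and that you have measured how many proxies one replica can serve comfortably; the 300 below is a made-up capacity, not a benchmark:

```python
import math


def control_plane_replicas(proxies: int, proxies_per_replica: int, min_replicas: int = 2) -> int:
    """Replicas needed so no replica serves more than `proxies_per_replica` proxies."""
    needed = math.ceil(proxies / proxies_per_replica)
    return max(min_replicas, needed)  # keep at least two replicas for availability


print(control_plane_replicas(1_000, 300))  # 4
print(control_plane_replicas(200, 300))    # 2
```

In Kubernetes this is usually automated with a HorizontalPodAutoscaler on the control plane deployment (for Istio, `istiod`) rather than computed by hand.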


Here’s the thing: scaling isn’t just a technical task; it’s a strategy. We’ve all seen clusters crash because a single update flooded the network. Here is how we avoid that.

Use Namespace Isolation

Don't let every service talk to every other service. Use namespaces to group related services. This creates smaller "islands" within your mesh, making it much easier to manage and scale.
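
In Istio, one way to enforce these islands is an `AuthorizationPolicy` that only admits callers from the same namespace. This is a minimal sketch built as a Python dict. The namespace and policy names are hypothetical, the `namespaces` source match relies on mutual TLS being enabled, and you would typically also list the namespace that runs your ingress gateway:

```python
import json


def namespace_isolation_policy(namespace: str, extra_namespaces: tuple[str, ...] = ()) -> dict:
    """ALLOW policy for every workload in `namespace`; requests matching no ALLOW rule are denied."""
    allowed = [namespace, *extra_namespaces]
    return {
        "apiVersion": "security.istio.io/v1beta1",
        "kind": "AuthorizationPolicy",
        "metadata": {"name": "allow-same-namespace", "namespace": namespace},
        "spec": {
            "action": "ALLOW",
            # No selector: the policy covers all workloads in the namespace.
            "rules": [{"from": [{"source": {"namespaces": allowed}}]}],
        },
    }


# "istio-ingress" stands in for wherever your ingress gateway runs (hypothetical).
policy = namespace_isolation_policy("payments", ("istio-ingress",))
print(json.dumps(policy, indent=2))
```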

Implement Connection Pooling

Opening and closing connections is expensive: each new connection costs a TCP handshake and, in a mesh with mutual TLS, a TLS handshake as well. With connection pooling, the mesh keeps connections open and reuses them. It's like keeping a door open for a crowd instead of locking and unlocking it for every person.
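
Real meshes do this inside the proxy (in Istio, the limits live under `connectionPool` in a `DestinationRule`), but the idea itself is small. Here is a minimal, single-threaded Python sketch of a pool that reuses idle connections; `FakeConnection` is a hypothetical stand-in for a real TCP/TLS connection:

```python
import queue


class FakeConnection:
    """Hypothetical stand-in for an expensive-to-open TCP/TLS connection."""

    def __init__(self, conn_id: int):
        self.conn_id = conn_id
        self.closed = False

    def close(self) -> None:
        self.closed = True


class ConnectionPool:
    """Hands out idle connections first and only opens new ones when none are free."""

    def __init__(self, max_idle: int):
        self._idle: queue.LifoQueue = queue.LifoQueue(maxsize=max_idle)
        self.opened = 0  # connections actually created (not synchronized: single-threaded sketch)

    def acquire(self) -> FakeConnection:
        try:
            return self._idle.get_nowait()  # reuse an already-open connection
        except queue.Empty:
            self.opened += 1
            return FakeConnection(self.opened)  # pay the setup cost only here

    def release(self, conn: FakeConnection) -> None:
        try:
            self._idle.put_nowait(conn)  # keep the "door open" for the next caller
        except queue.Full:
            conn.close()  # pool already holds max_idle connections: close the surplus


pool = ConnectionPool(max_idle=10)
for _ in range(1_000):
    conn = pool.acquire()
    pool.release(conn)
print(pool.opened)  # 1
```

A thousand sequential requests cost one connection setup instead of a thousand.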

Monitor Your Overhead

You can't fix what you don't measure. Use tools like Prometheus to track how much CPU your proxies use. If you see a spike, it’s time to check your "load balancing" settings.
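
As a starting point, here is a sketch that builds a Prometheus instant-query URL for sidecar CPU usage per namespace. It assumes Prometheus scrapes the kubelet's cAdvisor metrics (`container_cpu_usage_seconds_total`) and that sidecars run in a container named `istio-proxy`, as they do in Istio; the server address is hypothetical:

```python
from urllib.parse import urlencode

PROMETHEUS_URL = "http://prometheus.example:9090"  # hypothetical server address

# CPU cores used by Istio sidecar containers, summed per namespace over a 5-minute window.
SIDECAR_CPU_QUERY = (
    'sum by (namespace) ('
    'rate(container_cpu_usage_seconds_total{container="istio-proxy"}[5m]))'
)


def instant_query_url(base_url: str, promql: str) -> str:
    """Build a URL for the Prometheus HTTP API instant-query endpoint."""
    return f"{base_url}/api/v1/query?{urlencode({'query': promql})}"


url = instant_query_url(PROMETHEUS_URL, SIDECAR_CPU_QUERY)
print(url)

# Against a real server, the response could be read like this:
# import json, urllib.request
# with urllib.request.urlopen(url) as resp:
#     for series in json.load(resp)["data"]["result"]:
#         print(series["metric"].get("namespace"), series["value"][1])
```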

Pro Tip: Always look at the 99th percentile (p99) latency, the value that only the slowest 1% of requests exceed. Averages hide these slow outliers, and that tail is usually where scaling issues show up first.
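
To see how an average can hide the problem, here is a minimal nearest-rank percentile sketch over a hypothetical batch of 100 request latencies, where two slow requests barely move the mean but set the tail:

```python
import math


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = max(math.ceil(p * len(ordered) / 100), 1)  # 1-based rank
    return ordered[rank - 1]


# Hypothetical batch of 100 request latencies: 98 fast requests and 2 slow ones.
latencies_ms = [10.0] * 98 + [250.0, 900.0]
mean = sum(latencies_ms) / len(latencies_ms)

print(f"mean: {mean:.1f} ms")                      # mean: 21.3 ms
print(f"p50:  {percentile(latencies_ms, 50)} ms")  # p50:  10.0 ms
print(f"p99:  {percentile(latencies_ms, 99)} ms")  # p99:  250.0 ms
```

On a live Istio mesh you would typically get the same kind of number from Prometheus with `histogram_quantile(0.99, ...)` over the `istio_request_duration_milliseconds_bucket` metric.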


Scaling isn't always smooth sailing. You will likely hit a few bumps.

Latency "Tax"

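Every time a request passes through a proxy, it picks up a little extra latency. In the sidecar model, each service-to-service call crosses two proxies: the caller's and the callee's. While 1ms per proxy sounds small, it adds up when a request passes through five different services.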
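
Here is a quick way to estimate the tax for a call chain. It assumes the sidecar model (each hop crosses the caller's outbound proxy and the callee's inbound proxy), uses the 1 ms-per-proxy figure above purely as an illustration, and ignores any ingress gateway hop:

```python
def mesh_latency_tax_ms(services_in_chain: int, per_proxy_ms: float, proxies_per_hop: int = 2) -> float:
    """Extra latency the proxies add to one request flowing through a linear chain of services."""
    hops = max(services_in_chain - 1, 0)  # A -> B -> C has two hops
    return hops * proxies_per_hop * per_proxy_ms


print(mesh_latency_tax_ms(5, per_proxy_ms=1.0))  # 8.0
```

Four hops times two proxies turns "1 ms" into roughly 8 ms of added latency, which is why trimming proxy work matters on deep call chains.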

Configuration Complexity

The more you scale, the more YAML files you have to manage. This is where GitOps comes in handy: changes live in Git, get reviewed, and are applied automatically, so a manual typo is far less likely to bring down the site.
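
A GitOps pipeline can also catch typos before anything reaches the cluster. Here is a minimal sketch of a pre-merge check. It assumes manifests have already been parsed into dicts (for example with a YAML library) and uses a hypothetical rule set; in an Istio mesh, `istioctl analyze` performs much deeper validation:

```python
REQUIRED_FIELDS = ("apiVersion", "kind", "metadata", "spec")  # hypothetical rule set


def validate_manifest(manifest: dict) -> list[str]:
    """Return human-readable problems; an empty list means the manifest passed."""
    errors = [f"missing top-level field: {key}" for key in REQUIRED_FIELDS if key not in manifest]
    metadata = manifest.get("metadata") or {}
    for key in ("name", "namespace"):
        if not metadata.get(key):
            errors.append(f"metadata.{key} is missing or empty")
    return errors


good = {
    "apiVersion": "networking.istio.io/v1beta1",
    "kind": "Sidecar",
    "metadata": {"name": "default", "namespace": "payments"},
    "spec": {"egress": [{"hosts": ["./*"]}]},
}
typo = {**good, "apiVersoin": good["apiVersion"]}
del typo["apiVersion"]  # simulate a hand-edited typo in a field name

print(validate_manifest(good))  # []
print(validate_manifest(typo))  # ['missing top-level field: apiVersion']
```

A CI job would run a check like this on every changed file and fail the merge on any non-empty result.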

Telemetry Overload

A service mesh generates a lot of data. Logs, traces, and metrics can quickly fill up your storage. We recommend sampling your traces: you don't need to record every single request; 5% or 10% is usually enough to see the big picture.
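
Sampling works best when every proxy makes the same keep-or-drop decision for a given request; otherwise you end up with traces full of holes. A common approach, similar in spirit to OpenTelemetry's trace-ID-ratio sampler, is to derive the decision from the trace ID itself. Here is a minimal sketch, assuming string trace IDs and a 10% rate:

```python
import hashlib


def should_sample(trace_id: str, rate: float) -> bool:
    """Keep a trace if its ID hashes into the lowest `rate` fraction of the hash space."""
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64  # roughly uniform between 0 and 1
    return bucket < rate


# Every service that sees the same trace ID makes the same decision,
# so sampled traces stay complete end to end.
print(should_sample("trace-42", 0.10) == should_sample("trace-42", 0.10))  # True

ids = [f"trace-{i}" for i in range(50_000)]
kept = sum(should_sample(t, 0.10) for t in ids)
print(f"kept {kept / len(ids):.1%} of traces")  # close to 10%
```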

Scaling service mesh is a journey, not a destination. As your business grows, your network needs to be flexible enough to grow with it. By focusing on smart configurations, choosing the right proxy model, and keeping a close eye on your metrics, you can build a system that is both powerful and efficient.

At our core, we believe in building tech that empowers people. We’re here to help you navigate these complex waters so you can focus on what really matters: delivering value to your customers. We've helped dozens of clients turn their messy networks into sleek, high-performing machines, and we're ready to do the same for you.

Ready to streamline your network? Let’s talk about how we can optimize your infrastructure today.

Frequently Asked Questions

Does a service mesh slow down my application?

It adds a very small amount of latency. However, the benefits of security and better traffic control usually outweigh that tiny delay. Proper scaling and tuning can keep this "tax" very low.

When should I start thinking about scaling my service mesh?

You should look at scaling once you hit about 50 to 100 microservices. Before that, the default settings usually work fine. Once you go bigger, you'll notice resource usage start to climb.

Is Istio the only service mesh option?

No, there are many options, like Linkerd, Consul, and Kuma. Linkerd is known for being very light and fast, which makes it a great choice if you are worried about the "latency tax."

Surbhi Suhane is an experienced digital marketing and content specialist with deep expertise in the Getting Things Done (GTD) methodology and process automation. Surbhi is adept at optimizing workflows and using automation tools to boost productivity and deliver impactful results in content creation and SEO.
