
How ClickUp Enables Outcome-Based Project Management (Not Just Task Tracking)

🕓 February 15, 2026

Every enterprise network is a living system. It needs constant feeding: patches, capacity upgrades, configuration changes, hardware refreshes, and security stack updates. For decades, IT teams accepted this reality as simply part of the job. Someone had to log into every appliance, verify firmware versions, test compatibility before deploying updates, and coordinate maintenance windows that inevitably disrupted business hours.

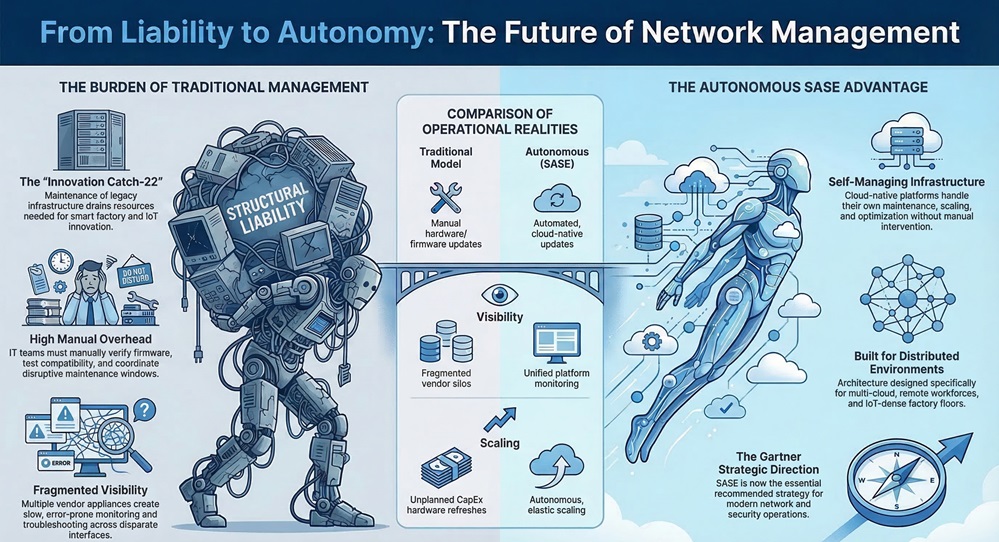

The problem is that this model was designed for a different era. When every user sat inside a corporate office, connected to on-premises applications, the scope of what needed managing was finite and predictable. That world no longer exists. Organizations now operate across distributed branch locations, multi-cloud environments, remote workforces, and IoT-dense factory floors. Managing the life cycle of a network platform in this environment using traditional methods is no longer merely a challenge. It is a structural liability.

Autonomous Platform Life Cycle Management refers to the capability within a modern cloud-native platform to handle its own infrastructure maintenance, scaling, updating, and optimization without requiring manual intervention from IT. This concept sits at the heart of why Secure Access Service Edge (SASE) architecture has become the strategic direction recommended by Gartner for enterprise networking and security. Understanding how it works, why it matters, and what it changes for IT operations is increasingly essential for any organization planning its network and security strategy.

Before examining the autonomous model, it is worth being specific about what organizations absorb when they manage network and security infrastructure through conventional point solutions.

Hardware appliances at every branch location each follow their own life cycle. A firewall bought in year one will reach end-of-support in year five or six, requiring a hardware refresh. An SD-WAN appliance might hit processing limits before its scheduled replacement cycle if user volumes spike or new security capabilities like TLS inspection require additional compute. Each of these events triggers unplanned capital expenditure.

Beyond hardware, the software layer demands continuous attention. Security signatures need daily updates. Firmware must be tested against existing configurations before deployment. New features require software upgrades that carry downtime risk. Organizations running multiple point solutions across their network stack face an environment where each vendor operates on its own update cadence, creating a constant stream of maintenance activity that consumes IT resources without directly advancing any business objective.

The manufacturing sector illustrates this particularly well. Lean IT teams in manufacturing environments often spend the majority of their time maintaining legacy infrastructure rather than supporting digital transformation initiatives like smart factory deployments or IoT integration. This creates a catch-22: the more complex the infrastructure, the more IT time it consumes, leaving less capacity to support the innovation that would reduce dependence on that infrastructure.

Visibility compounds the problem further. In a stack built from multiple vendor appliances, each device has its own monitoring interface, its own log format, and its own alerting logic. Correlating a security event across an NGFW, a separate IPS appliance, and a cloud access security broker from different vendors is slow and error-prone. Troubleshooting a performance issue across that same stack requires expertise in each product individually.
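To make the correlation problem concrete, here is a minimal Python sketch of what an IT team has to build for itself in a multi-vendor stack: one adapter per product to map each log format into a common record, followed by a simple grouping step. The record layouts and field names (`srcaddr`, `attacker`, `client`, and so on) are invented for illustration and do not reflect any real vendor's log schema.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    source: str   # which product emitted the event
    src_ip: str   # client address the event refers to
    action: str   # normalized verdict, e.g. "block" or "alert"


# One adapter per product. All input field names are hypothetical.
def from_firewall(rec: dict) -> Event:
    return Event("ngfw", rec["srcaddr"], rec["verdict"].lower())


def from_ips(rec: dict) -> Event:
    return Event("ips", rec["attacker"], "alert")


def from_casb(rec: dict) -> Event:
    return Event("casb", rec["client"]["ip"], rec["outcome"].lower())


def correlate(events: list[Event]) -> dict[str, set[str]]:
    """Group events by source IP and keep IPs reported by more than one product."""
    seen: dict[str, set[str]] = defaultdict(set)
    for ev in events:
        seen[ev.src_ip].add(ev.source)
    return {ip: sources for ip, sources in seen.items() if len(sources) > 1}


events = [
    from_firewall({"srcaddr": "10.0.0.5", "verdict": "BLOCK"}),
    from_ips({"attacker": "10.0.0.5"}),
    from_casb({"client": {"ip": "10.0.0.9"}, "outcome": "Allow"}),
]
print(correlate(events))
```

Every adapter in this sketch is code that has to be maintained whenever a vendor changes its log format, which is the overhead a single shared event store is meant to remove.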


Autonomous Platform Life Cycle Management is not a feature that can be bolted onto a legacy architecture. It requires a specific structural foundation: a cloud-native, globally distributed platform where all networking and security capabilities run in software on shared infrastructure managed by the provider.

In a SASE architecture, traffic from every location, whether a physical branch, a cloud data center, or a remote user, connects to the nearest Point of Presence (PoP) operated by the SASE provider. At that PoP, a converged stack processes the traffic through networking optimization and the full security inspection pipeline within a single pass. Because the entire platform runs as software in the provider's cloud infrastructure, every upgrade, every new capability, and every scaling event happens at the platform level, not at the customer's individual deployment.

This is the structural shift that enables autonomous life cycle management. When the platform is the product rather than the appliance, the provider controls the complete stack. They can push security signature updates globally within minutes. They can add new capabilities and make them available to every customer simultaneously without requiring a hardware refresh or a maintenance window. When compute demand increases because an organization adds users or enables TLS inspection across more traffic, the cloud infrastructure scales elastically to meet that demand.

The customer's network does not experience this as a maintenance event. It simply works at higher capacity with newer capabilities.


In a traditional model, keeping the security stack current is a constant operational burden. A vulnerability disclosed on Monday may require emergency patching across dozens of branch firewalls, each requiring a maintenance window, a configuration backup, and post-update testing. In a cloud-native SASE platform, the provider handles this at the infrastructure layer. The customer's security posture benefits from the update without any IT involvement.

This matters significantly for industries with compliance obligations. A retail organization running point-of-sale systems across hundreds of locations cannot afford the window of exposure created by slow, manual patching cycles. A pharmaceutical company where a ransomware compromise could have life-safety consequences needs security updates deployed in hours, not weeks.

Branch appliances are bounded by their physical specifications. A device with a fixed processing capacity will eventually reach its ceiling, particularly as security capabilities like full TLS inspection consume more compute resources than basic packet filtering. When that ceiling is reached, the organization faces an unplanned appliance refresh, which represents both capital expenditure and deployment effort.

Cloud-native platforms are not subject to these constraints. Elastic infrastructure scales dynamically to meet demand. An organization that doubles its remote workforce does not need to purchase more hardware. An organization that enables a new security capability does not need to resize its branch devices. The platform accommodates both scenarios through automatic resource allocation.
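The difference between a fixed ceiling and elastic allocation can be illustrated with a little arithmetic. The Python sketch below is an assumption-laden illustration, not a description of how any particular provider scales: the throughput figures, the 20% headroom, and the function name `instances_needed` are all hypothetical.

```python
import math


def instances_needed(demand_mbps: float, per_instance_mbps: float, headroom: float = 0.2) -> int:
    """Smallest instance count that serves the demand with spare headroom."""
    if per_instance_mbps <= 0:
        raise ValueError("per_instance_mbps must be positive")
    return max(1, math.ceil(demand_mbps * (1 + headroom) / per_instance_mbps))


# Assumed figures for illustration only.
APPLIANCE_CAPACITY_MBPS = 1000   # fixed ceiling of a branch appliance
INSTANCE_CAPACITY_MBPS = 500     # capacity of one elastic processing instance

for demand in (400, 900, 1800):
    appliance_ok = demand <= APPLIANCE_CAPACITY_MBPS
    count = instances_needed(demand, INSTANCE_CAPACITY_MBPS)
    print(f"{demand} Mbps: appliance sufficient={appliance_ok}, elastic instances={count}")
```

In this toy model the appliance simply stops being sufficient once demand passes its ceiling, while the elastic pool only needs a larger instance count, which is a calculation the provider repeats continuously rather than a purchase the customer has to plan.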

Network reliability in a traditional architecture depends on redundancy configurations that IT teams design, deploy, and test manually. High-availability failover scenarios require pre-planned configurations and periodic validation. When a PoP or network path becomes unavailable in a SASE architecture, the platform's self-healing mechanisms automatically detect the degradation and route traffic to the next available PoP. The application layer remains unaffected. No manual intervention is required, and no pre-planned failover configuration needs to be maintained by IT staff.
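The selection logic behind this kind of failover can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not any provider's actual algorithm: the `PoP` class, the latency figures, and the rule "pick the lowest-latency healthy PoP" are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PoP:
    name: str
    latency_ms: float   # measured round-trip time from the site
    healthy: bool       # result of the latest health probe


def select_pop(pops: list[PoP]) -> Optional[PoP]:
    """Return the lowest-latency healthy PoP, or None if none is reachable."""
    candidates = [p for p in pops if p.healthy]
    return min(candidates, key=lambda p: p.latency_ms, default=None)


pops = [PoP("pop-a", 12.0, True), PoP("pop-b", 20.0, True), PoP("pop-c", 45.0, True)]
print(select_pop(pops).name)   # nearest PoP while everything is healthy

pops[0].healthy = False        # simulate the nearest PoP failing a health probe
print(select_pop(pops).name)   # traffic moves to the next available PoP
```

The point of the sketch is that the decision is recomputed from live health data each time, so there is no static failover configuration for IT staff to design or re-validate.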

One of the compounding costs in multi-vendor environments is policy fragmentation. Security policies defined at the perimeter firewall may not align with policies enforced at the cloud access security broker. Access policies for remote users may not reflect the same logic as branch access policies. When these tools are updated independently, policy drift accumulates over time.

In a converged SASE platform, all policies are managed from a single interface. Updates to access policies, firewall rules, or security settings propagate consistently across every location and user. There is no policy drift because there is no separate stack to drift against.
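Policy drift is easy to picture as a diff between what each tool enforces and what the organization intended. The Python sketch below shows the kind of check a team would need to run in a multi-vendor stack. The setting names, device names, and the `find_drift` helper are hypothetical and chosen only for illustration.

```python
def find_drift(baseline: dict[str, str], devices: dict[str, dict[str, str]]) -> dict[str, dict[str, tuple]]:
    """Report, per device, each setting whose value differs from the baseline."""
    drift = {}
    for device, policy in devices.items():
        diffs = {
            key: (baseline.get(key), policy.get(key))
            for key in baseline.keys() | policy.keys()
            if baseline.get(key) != policy.get(key)
        }
        if diffs:
            drift[device] = diffs
    return drift


# Intended policy, and the copies actually held by two separately managed tools.
baseline = {"tls_inspection": "on", "block_category": "malware"}
devices = {
    "branch-firewall": {"tls_inspection": "on", "block_category": "malware"},
    "casb": {"tls_inspection": "off", "block_category": "malware"},
}
print(find_drift(baseline, devices))
```

With a single policy store there is only one copy of each setting, so this comparison has nothing to compare against and the audit step itself becomes unnecessary.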

In a hardware-based architecture, new security capabilities often require new hardware. Adding a dedicated DLP appliance means procuring, deploying, and configuring physical devices. Adding endpoint detection capabilities means another agent, another management console, another vendor relationship.

In a cloud-native platform, new capabilities are enabled through a subscription change and policy configuration. The infrastructure to run those capabilities already exists across the provider's global PoP network.

Organizations that previously could not justify the operational complexity of deploying DLP can adopt it by adjusting their subscription and configuring policies in the same interface they already use. What previously required months of deployment effort becomes a matter of days.


The practical impact of autonomous life cycle management is not simply that IT does less maintenance. It is that IT capacity is reallocated toward work that drives business outcomes.

IT teams in organizations that have moved to cloud-native SASE platforms consistently report that troubleshooting time decreases substantially. Because all network and security events are stored in a common data repository and monitoring tools are converged, identifying the root cause of a performance or security issue is faster and requires less cross-vendor coordination. The work that remains is more strategic: analyzing threat patterns, refining access policies, supporting business expansion, and evaluating new capabilities.

The management model itself can be adapted to fit the organization's preferences. Teams that want direct control retain it through a full-featured self-service portal. Organizations that prefer to delegate ongoing management can work with the SASE provider or a managed service partner. Either way, the underlying platform maintenance happens autonomously, independent of which management model the customer chooses.

Manufacturing and Industry 4.0

Smart factory deployments, IoT device proliferation, and the integration of operational technology with IT networks create an environment where the scope of what needs securing and managing expands continuously. Autonomous life cycle management means that new IoT-connected devices benefit from updated security policies and threat detection capabilities without triggering a hardware refresh cycle at the factory floor.

Retail and Multi-Location Operations

Opening a new store location in a traditional model involves ordering hardware, scheduling installation, configuring devices, and validating security policies before the location goes live. With zero-touch provisioning in a SASE platform, the hardware requirement at the branch is minimal. Plug in the socket device, connect to the platform, and the location inherits all current policies and capabilities automatically. Closing or relocating a store is equally simple.

Pharmaceuticals and Healthcare

Regulatory compliance in these sectors requires consistent security controls across all locations. Autonomous policy management ensures that a configuration change made centrally applies everywhere simultaneously, reducing the risk of compliance gaps that arise when manual update processes are inconsistently applied across a distributed estate.

The traditional model of enterprise network and security management was built around a set of assumptions that no longer hold. Users are distributed. Applications live in the cloud. The attack surface expands continuously. Managing this environment through a stack of discrete appliances that each require their own maintenance cycles, configuration management, and capacity planning has become structurally unsustainable for most organizations.

Autonomous Platform Life Cycle Management addresses the root cause of this challenge by shifting infrastructure maintenance from a customer responsibility to a platform function. Updates happen automatically. Scaling happens elastically. Self-healing ensures continuity without pre-planned intervention. New capabilities arrive through the existing platform rather than through new hardware deployments.

The result is that IT teams can run more effective security at a lower total cost, with more time available for work that directly supports business objectives. For organizations evaluating their network and security strategy, understanding this shift is not simply about choosing a technology. It is about choosing a fundamentally different operating model for the infrastructure their entire business depends on.


Autonomous life cycle management does not mean IT gives up control. IT retains complete control over policies, configurations, and access management. What changes is who is responsible for maintaining the infrastructure that runs those policies. The SASE provider handles platform-level maintenance. IT handles how the platform is configured and used.

When user volumes increase or new security capabilities are enabled that require more compute, the cloud infrastructure allocates additional resources dynamically. This happens at the provider's infrastructure layer and is transparent to the customer. There is no capacity planning required on the customer side, and no hardware purchase triggers.

Updates to a cloud-native SASE platform are designed to be non-disruptive. Configuration and policy settings are preserved across updates. Customers do not need to reconfigure their environments after the platform receives new capabilities or security updates.

Autonomous life cycle management is particularly well suited to organizations with small IT teams. These organizations benefit most from removing the ongoing maintenance burden, since their teams have the least capacity to absorb it. Autonomous life cycle management allows a small team to operate a secure, globally distributed network without the staffing overhead that the same scope would require in a traditional architecture.

Organizations can also choose how much of the day-to-day management they handle themselves. SASE providers typically offer self-service, co-managed, and fully managed models. In all cases, the underlying platform maintenance is handled autonomously. The management model choice relates to how policies and configurations are managed, not whether the platform receives updates and scales automatically.

Surbhi Suhane is an experienced digital marketing and content specialist with deep expertise in Getting Things Done (GTD) methodology and process automation, and is adept at optimizing workflows and leveraging automation tools to enhance productivity and deliver impactful results in content creation and SEO optimization.
